Researching GPUs before a purchase can become a headache because of the marketing terms each brand uses. If you’ve browsed the internet for a decent AMD graphics card, you’ve probably seen terms like GCN and RDNA.

So, what exactly does GCN mean in a GPU? And should you care about it or is it just a marketing buzzword?

Let’s find out.

What is GCN Architecture?

Graphics Core Next, or GCN for short, is a GPU microarchitecture from AMD, and the first card based on it went on sale in January 2012. It offers strong computational performance in both gaming and content creation. Its scalable design is also what made the PlayStation 4 and Xbox One possible.

GCN was AMD’s mainstay for years and went through many refinements along the way. From die shrinks to rearranged core components, let’s look at how the GCN microarchitecture evolved with each generation.


GCN 1

December 2011 was a pivotal time for GPUs in general, as AMD announced its first GCN-based GPU, the HD 7970. This first-generation GCN product was also the first to support DX11.1. AMD’s Oland, Cape Verde, Pitcairn, and Tahiti GPUs are built on this architecture.

In later years, the GCN 1 lineup also gained DX12 and Vulkan support, helped by its asynchronous compute capability. The microarchitecture was a major step up from the previous VLIW design because it addressed that generation’s main weakness: performance in compute-heavy workloads.

This push into asynchronous compute, with FP32 and FP64 operations, really paid off in the long run. It made GCN cards more future-proof than the competing Nvidia offerings. GCN 1 also brought hardware-accelerated tessellation, partially resident textures, and MLAA support, along with many other noteworthy features that were updated over time.

Some of the notable first-generation GCN graphics cards are the HD 7730, R9 280, R9 280X, and HD 7950 GHz edition.

Despite being a powerhouse with improved efficiency, GCN 1 still fell short in several areas, software maturity being one of them. Considering the added pressure from the competition, it was time for a new GCN generation.


GCN 2

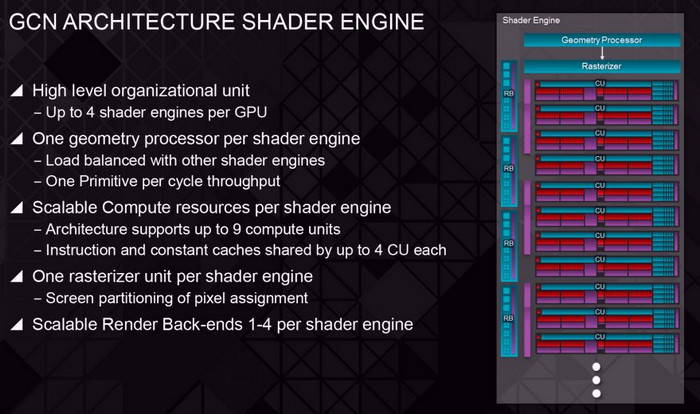

In March 2013, the optimization phase for the GCN architecture began. With a slew of new features and a revamped shader engine, GCN 2 promised to improve on every aspect of the previous generation. This architecture appeared in AMD’s Bonaire and Hawaii GPUs.

The improved cache and modified ALU design also helped raise overall tessellation performance. The memory subsystem was coupled with the ROPs (render output units) to provide an added boost in compute workloads and gaming, and the number of ROPs increased dramatically.

The most noteworthy achievement of the GCN 2 architecture is its use in the PlayStation 4 and Xbox One consoles. The original PS4, the PS4 Slim, and the Xbox One use GCN 2-based integrated GPUs to render games, while the later PS4 Pro and Xbox One X moved to newer GCN revisions.

The second-generation GCN architecture also ushered in FreeSync support for variable refresh rate gaming monitors.

Some of the most popular GCN 2 architecture-based GPUs were AMD HD 7790, R7 260, R7 260X, R9 290, R9 290X, R9 390, and R9 390X.

GCN 3

The third-generation GCN architecture (GCN 3) brought the biggest single addition to the architecture: lossless Delta Color Compression. By storing the differences between neighboring pixel values instead of full values where possible, it raises the GPU’s effective memory bandwidth by around 20%.

This allowed for improved power efficiency, better-fed ROPs, and a much more efficient memory bus.
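To make the idea behind delta color compression more concrete, here is a minimal Python sketch. It is not AMD’s hardware algorithm, whose details are not covered in this article. It only illustrates the general principle, assuming a one-dimensional strip of 8-bit values from a single color channel. The function names and the sample tile are hypothetical.

```python
def delta_encode(tile):
    """Split a tile into a base value and per-pixel differences from it."""
    base = tile[0]
    deltas = [value - base for value in tile[1:]]
    return base, deltas


def delta_decode(base, deltas):
    """Rebuild the original tile exactly, which is what makes this lossless."""
    return [base] + [base + d for d in deltas]


def bits_per_delta(deltas):
    """Smallest signed bit width that can hold every delta."""
    bits = 1
    while any(not (-(1 << (bits - 1)) <= d < (1 << (bits - 1))) for d in deltas):
        bits += 1
    return bits


def compressed_size_bits(tile, channel_bits=8):
    """One full-width base value plus narrow deltas for the remaining pixels."""
    base, deltas = delta_encode(tile)
    return channel_bits + len(deltas) * bits_per_delta(deltas)


# Hypothetical 8-pixel strip of one 8-bit color channel with similar values.
tile = [200, 201, 199, 202, 200, 200, 203, 198]
raw_bits = len(tile) * 8
packed_bits = compressed_size_bits(tile)
print(raw_bits, packed_bits)
```

For this smooth strip, the deltas fit in 3 bits each, so the sketch stores 29 bits instead of 64. A noisy strip would need wider deltas and gain little or nothing, which is why the benefit of this kind of compression depends heavily on the image content.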

Improved caching and tessellation in GCN 3 also provided a large performance uplift in many compute-heavy effects, such as god rays and ambient occlusion.

AMD’s Fiji and Tonga GPUs are built on this third-generation GCN architecture. They include the R9 285, R9 380, R9 380X, R9 Fury, R9 Fury X, R9 Nano, and Radeon Pro Duo.

GCN 3’s most notable achievement, HBM VRAM on a consumer-grade GPU, debuted with the AMD R9 Fury X. It introduced unprecedented raw bandwidth, lower latency, and much better power efficiency compared to traditional GDDR VRAM.

GCN 4

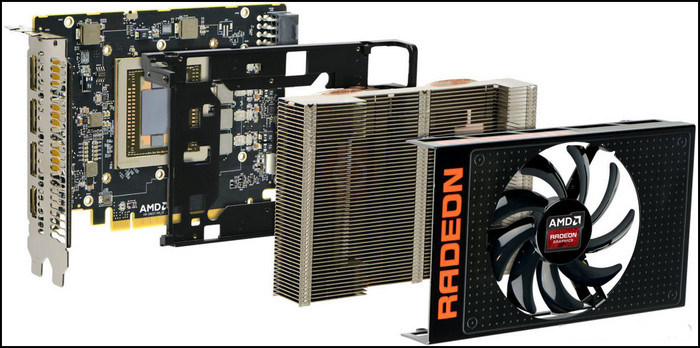

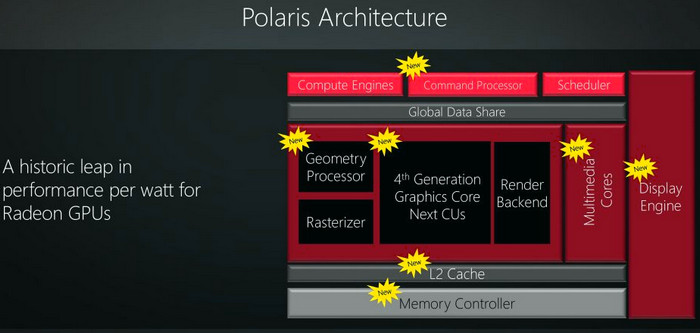

GCN 4 saw a huge leap in performance and architectural advancement compared to previous generations.

For GCN’s fourth iteration, AMD reworked large parts of the architectural design. This allowed it to implement newer computational techniques that were much needed to compete against Nvidia’s Pascal lineup.

AMD’s Polaris 10, 11, 12, and 20 GPUs (the Radeon RX 400 and RX 500 series) are built on this fourth-generation GCN architecture.

GCN 4 not only improved Delta Color Compression but also added the Primitive Discard Accelerator, which throws away triangles that cannot contribute any visible pixels before they reach the rasterizer. Along with a better L2 cache, this allowed GCN 4 GPUs to beat even powerful previous-generation GPUs like the R9 290X.
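The Primitive Discard Accelerator is a hardware block, so the following is only a rough Python sketch of the kind of test such a unit could perform. It assumes one sample per pixel located at the pixel center, and it uses a conservative bounding-box check. The function names and the exact rules are illustrative assumptions, not AMD’s actual logic.

```python
import math


def twice_signed_area(a, b, c):
    """Cross product of two edges; zero means the triangle is degenerate."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])


def spans_pixel_center(lo, hi):
    """True if some pixel center (k + 0.5) lies within [lo, hi]."""
    first_center = math.ceil(lo - 0.5) + 0.5
    return first_center <= hi


def should_discard(a, b, c):
    """Conservative early-out: drop triangles that cannot cover any sample."""
    if twice_signed_area(a, b, c) == 0:
        return True  # zero area: collapsed to a line or a point
    xs = (a[0], b[0], c[0])
    ys = (a[1], b[1], c[1])
    # If the bounding box misses every pixel center on either axis,
    # the triangle inside it cannot cover a sample either.
    return not (spans_pixel_center(min(xs), max(xs))
                and spans_pixel_center(min(ys), max(ys)))


print(should_discard((0, 0), (1, 1), (2, 2)))              # degenerate
print(should_discard((0.1, 0.1), (0.3, 0.1), (0.1, 0.3)))  # sub-pixel sliver
print(should_discard((0, 0), (4, 0), (0, 4)))              # normal triangle
```

The first two triangles are rejected and the third is kept. Rejecting such primitives early matters most in heavily tessellated scenes, where many triangles end up smaller than a pixel.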

Last but not least, this generation brought a much-needed die shrink and noticeably lower power consumption. Moving from 28nm to 14nm allowed AMD to add more CUs (compute units) and stay competitive in both gaming and productivity performance.

It also added support for DisplayPort 1.4 and HDMI 2.0b for high refresh rate gaming and home theater setups.

GCN 5

GCN 5, commonly known as the Vega architecture, delivered some of the best-value GPUs in a long time. Cards like the RX Vega 56 and RX Vega 64 really gave Nvidia a run for its money.

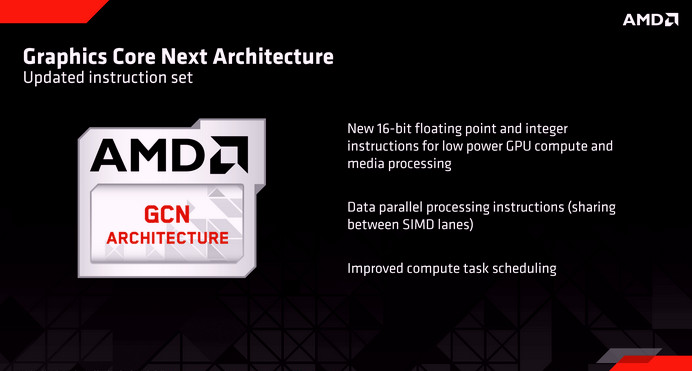

This new GCN architecture was built around the NCU (Next-generation Compute Unit), which promised much higher instructions per clock (IPC) and bandwidth. It wasn’t a completely new design, though. It was mainly a refinement of the traditional GCN CU.

GCN 5 also uses HBM2 memory, a larger memory address space, and a high-bandwidth memory controller to ensure lower latency and a mind-boggling data throughput of up to 1 terabyte per second.
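The 1 terabyte per second figure follows from simple arithmetic: peak memory bandwidth is the bus width multiplied by the per-pin data rate. The Python sketch below works it out. The bus widths and data rates are not from this article. They are commonly published specifications for the RX Vega 64 and Radeon VII and should be treated as assumptions.

```python
def peak_bandwidth_gb_per_s(bus_width_bits, data_rate_gbps_per_pin):
    """Peak bandwidth = bus width (bits) x per-pin rate (Gbit/s) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps_per_pin / 8


# Assumed specs: two HBM2 stacks (2048-bit) at 1.89 Gbit/s per pin.
vega_64 = peak_bandwidth_gb_per_s(2048, 1.89)

# Assumed specs: four HBM2 stacks (4096-bit) at 2.0 Gbit/s per pin.
radeon_vii = peak_bandwidth_gb_per_s(4096, 2.0)

print(f"RX Vega 64: {vega_64:.1f} GB/s")
print(f"Radeon VII: {radeon_vii:.1f} GB/s")
```

Under these assumptions, the RX Vega 64 lands at roughly 484 GB/s, while the Radeon VII reaches 1024 GB/s, which is where the "up to 1 terabyte per second" claim comes from.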

GCN vs RDNA: What’s the Difference?

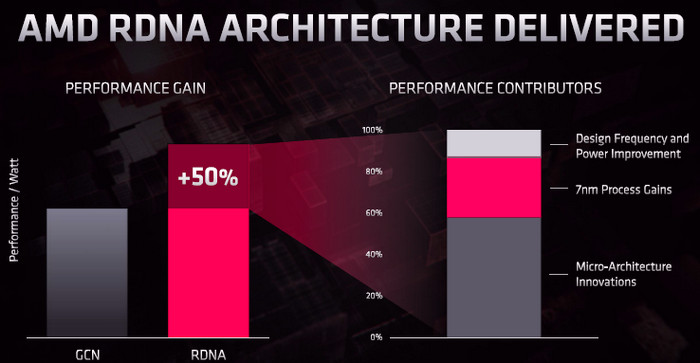

Powerful but underutilized: that is the best way to describe the GCN architecture. So in 2019, after around seven years, AMD finally moved on from GCN to a newer and more advanced architecture, RDNA.

From a technical perspective, RDNA is a substantially different and more refined architecture than GCN. RDNA’s native wavefront is wave32, half the width of GCN’s wave64. Each SIMD unit is also wider (32 lanes instead of 16), while each CU contains fewer of them (two instead of four).

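Unlike GCN, two RDNA CUs work in tandem as a pair that shares local data. In addition, a wave32 instruction can be issued every cycle rather than once every four cycles as with GCN’s wave64, so instruction issue for a single wavefront can be up to 4x faster. Together, these changes reduce bottlenecks and provide a significant performance and efficiency boost.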
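The relationship between wavefront width and SIMD width can be worked through with a few lines of Python. This is a simplified model, not a simulator. The lane and SIMD counts (four 16-lane SIMDs per GCN CU, two 32-lane SIMDs per RDNA CU) come from AMD’s public architecture material rather than from this article, and the class below is purely hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeUnit:
    name: str
    wave_size: int     # work-items per wavefront
    simd_lanes: int    # lanes per SIMD unit
    simds_per_cu: int  # SIMD units per CU

    def cycles_per_instruction(self):
        """Cycles one SIMD needs to push a full wavefront through its lanes."""
        return -(-self.wave_size // self.simd_lanes)  # ceiling division

    def lanes_per_cu(self):
        """Total lanes in the CU across all of its SIMD units."""
        return self.simd_lanes * self.simds_per_cu


gcn = ComputeUnit("GCN", wave_size=64, simd_lanes=16, simds_per_cu=4)
rdna = ComputeUnit("RDNA", wave_size=32, simd_lanes=32, simds_per_cu=2)

for cu in (gcn, rdna):
    print(cu.name, cu.cycles_per_instruction(), cu.lanes_per_cu())
```

In this model, both CUs have 64 lanes in total, but a GCN SIMD takes four cycles to execute one wave64 instruction, while an RDNA SIMD executes one wave32 instruction in a single cycle. That lower per-instruction latency is one reason RDNA copes better with workloads that cannot keep many wavefronts in flight.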

In a nutshell, RDNA is the new kid on the block who brings newer and more advanced tricks to the masses.


Frequently Asked Questions

What does GCN stand for?

GCN stands for Graphics Core Next.

What is the last GCN desktop GPU?

Radeon VII is the last GCN desktop GPU.

Are RDNA GPUs different from GCN ones?

Yes. RDNA is a completely different GPU architecture from GCN.

Wrapping Up

That’s about it. Hopefully, this write-up has cleared up any confusion you had about GCN.

If you have any further questions on this topic, feel free to ask them in the comment section below.

Have a nice day!