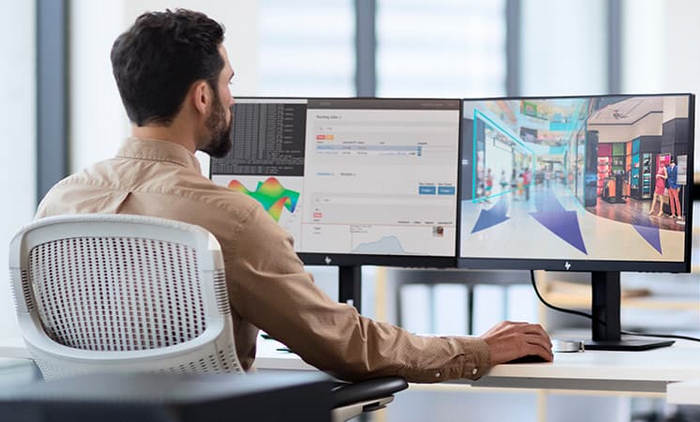

Multi-monitor setups are becoming essential for professional work and gaming. Since ultrawide monitors are expensive, a dual-monitor setup is a viable and cheaper alternative.

I ended up using up to three monitors to work and game comfortably. But the question is, do I need two graphics cards to use two or more monitors side by side?

Let me clear up the doubts with proper explanations and personal experience.

Does Dual Monitor Setup Require Two Graphics Cards?

No, you don’t need two graphics cards to use a dual-monitor setup. Almost every graphics card has two or more video output ports, and motherboards usually include at least two different ports. So, no worries if it’s just two monitors.

However, to avoid compatibility issues, make sure each monitor has an input that matches one of the GPU’s outputs.

For example, if your graphics card has 1 HDMI and 2 DP ports, either both monitors need a DP input, or one needs DP and the other HDMI.
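
The port-matching rule above can be sketched as a small check. This is only an illustration: the function name `assign_ports` and the monitor names are made up for the example, and it assumes a direct cable with no adapters, so a port only works with an input of the same type.

```python
from typing import Dict, List, Optional


def assign_ports(gpu_ports: List[str],
                 monitors: Dict[str, List[str]]) -> Optional[Dict[str, str]]:
    """Give every monitor its own GPU port of a type it accepts.

    Returns a monitor -> port-type mapping, or None if no assignment exists.
    Assumes direct cables only (no converters or adapters).
    """
    names = list(monitors)

    def solve(index: int, free: List[str]) -> Optional[Dict[str, str]]:
        if index == len(names):
            return {}  # every monitor has been placed
        name = names[index]
        # Try each free port type that this monitor can accept.
        for port_type in sorted(set(free) & set(monitors[name])):
            remaining = free.copy()
            remaining.remove(port_type)  # that physical port is now taken
            rest = solve(index + 1, remaining)
            if rest is not None:
                return {name: port_type, **rest}
        return None  # no free port fits this monitor

    return solve(0, list(gpu_ports))


# The card from the example: 1 HDMI and 2 DP outputs.
card = ["HDMI", "DP", "DP"]
print(assign_ports(card, {"left": ["DP"], "right": ["DP"]}))
print(assign_ports(card, {"left": ["DP"], "right": ["HDMI"]}))
print(assign_ports(card, {"left": ["HDMI"], "right": ["HDMI"]}))  # None
```

The last call returns `None` because two HDMI-only monitors can't share the card's single HDMI output without an adapter.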

Furthermore, you can also connect monitors to the motherboard I/O if your CPU has integrated graphics, such as basic Intel HD / UHD graphics or AMD’s Vega / RDNA. But Windows might lag on a dual-monitor setup, since a basic iGPU is not very powerful.

So, you don’t need an extra graphics card to use two monitors, as long as your monitor inputs match the GPU and motherboard outputs.

Every laptop has either dedicated or integrated graphics, so you can definitely use an extra monitor, but the limitation is the ports. You’ll hardly find a laptop with more than two display output ports. That means you can connect a Windows laptop to a projector, TV, monitor, or any display with an HDMI, DP, or VGA port, but usually no more than two external displays in addition to the built-in screen.

Pro Tip: You can use converters and adapters if your input and output ports don’t match.

What are the Benefits & Drawbacks of Using Multiple GPUs?

Having multiple GPUs in the system might feel satisfying, but it’s rarely an efficient build. Although there are benefits if you utilize CrossFire or SLI technology, the extra power consumption is wasted if the cards aren’t used properly.

A graphics card typically consumes more power than any other component in a computer. So, multiple GPUs will continuously cost a lot of electricity and require a more powerful PSU in your system.

Alongside power consumption, stability is a big concern. The two GPU drivers may conflict if your system holds graphics cards from two different manufacturers.

You cannot use SLI or CrossFire in such cases, so the cards can’t combine their power and work as one GPU.

Yes, you can run monitors on different graphics cards without any issues. But proper gaming across multiple graphics cards isn’t possible without SLI or CrossFire.

In fact, monitors connected to different types of GPUs won’t perform equally.

As a result, you can’t sync refresh rates between the GPUs and monitors. Different graphics cards can satisfy the multi-monitor need, yet it’s not a worthwhile setup considering electricity cost and efficiency.

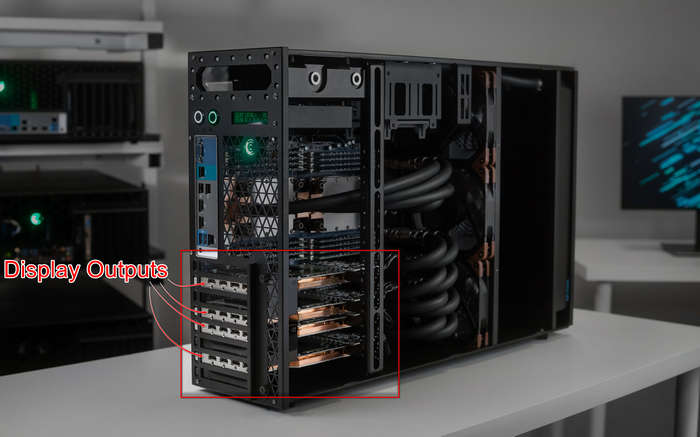

How Many Monitors Can You Use On One or Multiple GPUs?

There are a few scenarios you might be interested in. First, how many monitors can be connected to a single GPU, or vice versa?

The number of monitors you can connect is limited by the number of output ports on the graphics card. The ports vary by GPU model and vendor. But if you want to connect multiple monitors to the motherboard’s I/O ports, you must know how many displays your integrated graphics can support.

With an iGPU, a CPU can usually run two monitors, but you can check the specs on the manufacturer’s site to be sure.

Alternatively, it’s possible to connect a single monitor with multiple inputs to several ports of the same GPU, but that doesn’t make much sense. You might run into problems like your game launching on the wrong monitor input, which is annoying.

However, connecting such a multi-input monitor to different computers or consoles is a clever choice. You can switch between rigs or devices seamlessly with this simple trick.

Next, how many monitors (maximum) can you connect by adding more Graphics Cards to the system?

As I mentioned earlier, a graphics card can drive as many monitors as it has output ports, and adding more GPUs to your motherboard raises the limit in the same way. You can try this setup if you have old graphics cards in your collection.
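
As a rough sketch of that counting rule, the snippet below adds up the outputs of every graphics device in a system. The device names and numbers are hypothetical, and the optional `max_displays` field is an assumption standing in for a display limit you would look up on the manufacturer’s spec sheet.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GraphicsDevice:
    name: str
    output_ports: int
    # Display limit from the spec sheet, if you know it (assumed value).
    max_displays: Optional[int] = None

    def usable_outputs(self) -> int:
        """Ports that can actually drive a monitor at the same time."""
        if self.max_displays is None:
            return self.output_ports
        return min(self.output_ports, self.max_displays)


def total_monitors(devices: List[GraphicsDevice]) -> int:
    """Upper bound on monitors across all graphics devices in the system."""
    return sum(device.usable_outputs() for device in devices)


# Hypothetical build: one main card plus the motherboard's iGPU outputs.
build = [
    GraphicsDevice("main card", output_ports=4),
    GraphicsDevice("iGPU", output_ports=3, max_displays=2),
]
print(total_monitors(build))  # 4 + 2 = 6

# Adding an old card from the drawer raises the limit by its port count.
build.append(GraphicsDevice("old card", output_ports=3))
print(total_monitors(build))  # 9
```

This only counts connections. It says nothing about whether the GPUs can drive all of those monitors at the resolution and refresh rate you want.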

And lastly, can you use multiple monitors with a single output from a graphics card?

You can certainly connect several monitors to a single output of a graphics card, but with a simple splitter you can’t extend your desktop. You’ll only see the same image mirrored on every display connected through the splitter, and you may face issues like random signal loss. The exception is a DisplayPort hub that supports Multi-Stream Transport (MST), which can drive separate extended displays if your GPU and operating system support it.

So, consider these possibilities and set up your monitors accordingly.

What Are The Problems With High-Resolution & Refresh Rates?

Image quality depends heavily on the monitor’s resolution. A 43-inch monitor will look pixelated if it’s just an FHD (1080p) panel. So, big displays require higher resolutions to keep the image sharp.

In the same way, the graphics card must be capable of generating that higher-resolution output. But what happens if you connect 3 or 4 4K monitors to a decent graphics card?

It may not be able to drive every monitor at 4K, because not every port is the same. Each port type has its own output capability.

For instance, a VGA port is limited to about 2048×1536, single-link DVI is capped at 1920×1200 at 60Hz (dual-link DVI reaches 2560×1600), and newer ports like HDMI, DP, and Type-C can support up to 10K, depending on the port version and the processing power of the GPU.

Furthermore, the refresh rate is another significant factor from a gaming perspective. High refresh rates like 144Hz, 165Hz, 200Hz, and more require a hefty amount of power from a GPU.

You can’t use high-resolution displays at high refresh rates if your GPU can’t bear the load. Mixing monitors with different refresh rates can also spoil the gaming experience.
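
To get a feel for why resolution and refresh rate add up so quickly, here is a back-of-the-envelope sketch of the uncompressed video data a single display needs. It assumes 24 bits per pixel and ignores blanking intervals and compression, so real requirements are somewhat higher. The link figures in `APPROX_LINK_GBPS` are approximate effective data rates included as assumptions; check the specs for your own ports.

```python
# Approximate effective data rates in Gbit/s (assumed, for illustration only).
APPROX_LINK_GBPS = {
    "HDMI 2.0": 14.4,
    "DP 1.4": 25.92,
}


def pixel_data_rate_gbps(width: int, height: int, refresh_hz: int,
                         bits_per_pixel: int = 24) -> float:
    """Uncompressed pixel data per second in Gbit/s, ignoring blanking."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def fits_link(width: int, height: int, refresh_hz: int, link: str) -> bool:
    """True if the rough data rate fits within the assumed link budget."""
    return pixel_data_rate_gbps(width, height, refresh_hz) <= APPROX_LINK_GBPS[link]


print(round(pixel_data_rate_gbps(1920, 1080, 144), 2))  # 7.17
print(round(pixel_data_rate_gbps(3840, 2160, 60), 2))   # 11.94
print(fits_link(3840, 2160, 60, "HDMI 2.0"))            # True
print(fits_link(3840, 2160, 144, "DP 1.4"))             # False
```

By this estimate, 4K at 144Hz needs more than twice the data of 4K at 60Hz, which is why the same port that handles one comfortably can fall short on the other.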

FAQs

Do multiple monitors affect RAM?

Since a graphics card has its own VRAM, multiple monitors won’t affect system RAM. But an iGPU within an APU uses a portion of RAM as shared memory, so extra monitors can have a small effect.

Can I connect a 60Hz laptop to a 144Hz monitor?

Yes, you can connect your laptop to a monitor with any refresh rate, whether higher, like 144Hz or 165Hz, or lower, such as 30Hz.

Which monitor size is best?

Although the best monitor size depends on the user’s preferences, a 23-inch monitor with 1080p resolution seems about right.

Are two monitors worth it?

Most professionals prefer, and often require, a multi-monitor setup to work properly. Furthermore, many games now support multi-monitor setups.

What’s better: HDMI or DisplayPort?

DisplayPort (DP) generally supports higher resolutions and refresh rates between the monitor and GPU, depending on the port version. Plus, G-Sync works well over DP.

Final Verdict

You can almost always use two monitors at a time with any graphics card or integrated graphics. But a setup with more than 4 or 5 monitors may not be possible on one GPU. Although you can increase the connection limit with the motherboard’s I/O ports, 4K or other high-resolution output requires more GPU power.

Be sure to leave a comment to let us know whether you found this article helpful.